In this installment, we will cover what connects the controller to the computer.

Disk controllers use a system bus to talk to your CPU and memory. The bus also determines the maximum speed at which your disks can talk to the computer. There may be as many as six different system buses in your computer, but we are only interested in the ones that connect directly to your disk controllers.

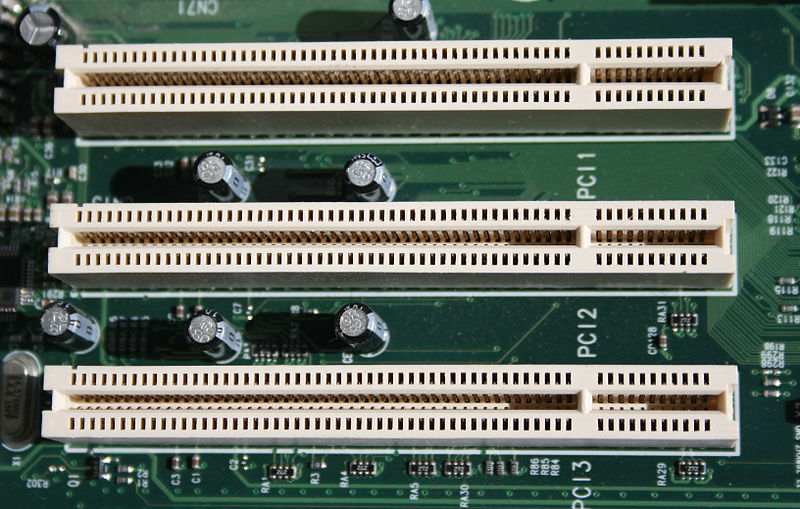

The oldest bus still in general use is PCI. You can still find it in desktops and servers, though it is on the way out. We are only covering PCI 2.0, 32 bits wide and running at 33 MHz, which allows a theoretical top speed of 133.33 MB/Sec. In reality, after overhead and other limitations, you end up with around 86 MB/Sec of throughput, a speed that a single modern disk can reach on its own. You generally don’t see PCI disk controllers with more than 4 ports. Adding more disk controllers to a system may not yield a direct increase in performance: even if you have multiple PCI slots, they may all run through a single PCI bus, limiting your total bandwidth to the system to 133.33 MB/Sec.

Credit: Jonathan Zander
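The PCI numbers above fall out of simple arithmetic: bus width in bytes times clock rate. Here is a minimal sketch of that calculation, along with what happens when several disks share one bus. The function names and the 90 MB/Sec disk speed are assumptions made up for illustration; only the bus width, clock, and 86 MB/Sec figure come from the text.

```python
def parallel_bus_peak_mb_per_sec(width_bits: int, clock_mhz: float) -> float:
    """Theoretical peak of a parallel bus that moves one word per clock cycle."""
    bytes_per_transfer = width_bits / 8
    # bytes per transfer * million transfers per second = MB/s (decimal megabytes)
    return bytes_per_transfer * clock_mhz


def aggregate_disk_throughput(disk_mb_per_sec: float, disk_count: int,
                              bus_ceiling_mb_per_sec: float) -> float:
    """Disks behind one shared bus can never exceed the bus ceiling in total."""
    return min(disk_mb_per_sec * disk_count, bus_ceiling_mb_per_sec)


PCI_CLOCK_MHZ = 100 / 3          # the nominal "33 MHz" clock is 33.33 MHz
REAL_WORLD_MB_PER_SEC = 86.0     # observed figure quoted in the article
ASSUMED_DISK_MB_PER_SEC = 90.0   # hypothetical disk speed, for illustration only

pci_peak = parallel_bus_peak_mb_per_sec(32, PCI_CLOCK_MHZ)
print(f"PCI 32-bit/33 MHz peak: {pci_peak:.2f} MB/s")
print(f"Real-world efficiency:  {REAL_WORLD_MB_PER_SEC / pci_peak:.1%}")

# Adding disks stops helping as soon as the shared bus is saturated.
for disks in (1, 2, 4):
    total = aggregate_disk_throughput(
        ASSUMED_DISK_MB_PER_SEC, disks, REAL_WORLD_MB_PER_SEC
    )
    print(f"{disks} disk(s): {total:.0f} MB/s total")
```

With these assumed numbers the total stays pinned at the bus ceiling no matter how many disks you add, which is the point of the paragraph above.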

IBM, HP, and Compaq came together to standardize a faster bus for servers, specifically for disk controllers and network interface cards. PCI-X builds on the PCI standard and is backwards compatible with it. At its initial launch it extended the PCI bus to 64 bits wide at 66 MHz, taking us from 133.33 MB/Sec to 533.3 MB/Sec, a 4x improvement. The next generation brought two more implementations, PCI-X 64 bit/100 MHz and PCI-X 64 bit/133 MHz, at 800 MB/Sec and 1067 MB/Sec respectively. This was a major step up, but it had several flaws. The physical connector was huge. It also carried over the shortcomings of PCI. Signal noise crossed between slots, so having several cards next to each other could cause errors. Communication was half-duplex: the bus couldn’t send and receive data at the same time. And you were only as fast as the slowest card on the bus: if you had a 66 MHz card, your 133 MHz card was slowed down to match.

Yikes!

Credit: Snickerdo
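The PCI-X figures use the same width-times-clock arithmetic as PCI, and the "slowest card wins" rule is just a minimum over the cards on the bus. The sketch below shows both. The function names are hypothetical, and the clocks are the nominal 66.67/100/133.33 MHz values behind the rounded "66" and "133" labels.

```python
def pcix_peak_mb_per_sec(clock_mhz: float, width_bits: int = 64) -> float:
    """Theoretical peak for a 64-bit PCI-X bus at the given clock."""
    return width_bits / 8 * clock_mhz


def shared_bus_clock_mhz(card_clocks_mhz: list[float]) -> float:
    """Every card on a shared PCI-X bus runs at the slowest card's clock."""
    return min(card_clocks_mhz)


CLOCK_66 = 200 / 3    # nominal "66 MHz"
CLOCK_100 = 100.0
CLOCK_133 = 400 / 3   # nominal "133 MHz"

for label, clock in (("66", CLOCK_66), ("100", CLOCK_100), ("133", CLOCK_133)):
    print(f"PCI-X 64 bit/{label} MHz: {pcix_peak_mb_per_sec(clock):.1f} MB/s")

# A 133 MHz card sharing a bus with a 66 MHz card is dragged down to 66 MHz.
bus_clock = shared_bus_clock_mhz([CLOCK_133, CLOCK_66])
print(f"Mixed bus runs at {bus_clock:.2f} MHz, "
      f"peak {pcix_peak_mb_per_sec(bus_clock):.1f} MB/s")
```

Note that the peak is shared by everything on that bus, so in the mixed case the fast card gives up half of its potential bandwidth.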

We have moved on to a completely new standard, PCI Express (PCIe). Some people confuse PCI-X with PCIe, but they are completely different. The PCIe standard was introduced in 2004 and was quickly adopted in mainstream computers for video cards, but it is a general-purpose system bus. There are several key differences between PCIe and the buses that came before it. It is a fully serial, bidirectional bus, so you can have multiple cards at multiple speeds reading and writing data at the same time. It also introduced the concept of lanes. A PCIe card uses between 1 and 16 lanes. Each lane in the 1.0 specification is rated at 250 MB/Sec. The 2.0 specification, introduced in 2007, doubled that to 500 MB/Sec. In 2011 the 3.0 specification will double that again to roughly 1 GB/Sec.

PCIe is also rated by how many transfers per second it can handle. Measuring transfers in gigatransfers or megatransfers has been around for a while, though it is not commonly used. See Gigatransfers at Wikipedia for a better explanation. One thing to be aware of is that the 1.0 and 2.0 standards lose about 20% of their raw transfer rate to the way data is encoded on the bus (8b/10b encoding); the per-lane figures above already include that loss. The 3.0 specification reduces the encoding loss to around 1.5%. As with the PCI bus, the per-lane figure is a maximum you won’t see in the real world, because packet and protocol overhead take a further share.

The most common PCIe slot sizes are 1x, 4x, 8x, and 16x. Slots are downwards compatible, so 1x, 4x, and 8x cards will all work in a 16x slot. Physical size does not guarantee speed, though: a card that is physically 16x may be 8x or slower internally. The same applies to the slot, which may be 16x in size but only operate at 8x speeds.

Credit: Snickerdo

PCI Express slots (from top to bottom: x4, x16, x1 and x16), compared to a traditional 32-bit PCI slot (bottom).
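To see how gigatransfers, encoding loss, and the per-lane MB/Sec ratings fit together, here is a rough sketch. The transfer rates (2.5, 5, and 8 GT/s) and the encoding schemes (8b/10b and 128b/130b) are taken from the PCIe specifications rather than from this article, and the class and field names are made up for illustration. It reads the 20% and 1.5% figures as the share of the raw signaling rate spent on line encoding; packet and protocol overhead are not modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PcieGen:
    name: str
    gigatransfers_per_sec: float  # raw signaling rate per lane
    payload_bits: int             # data bits carried per encoded group
    encoded_bits: int             # bits actually sent on the wire per group

    def encoding_overhead(self) -> float:
        """Fraction of the raw rate spent on line encoding."""
        return 1 - self.payload_bits / self.encoded_bits

    def lane_mb_per_sec(self) -> float:
        """Usable per-lane, per-direction bandwidth after encoding, in MB/s."""
        usable_gbit = (self.gigatransfers_per_sec
                       * self.payload_bits / self.encoded_bits)
        return usable_gbit * 1000 / 8  # Gbit/s -> MB/s


GENERATIONS = (
    PcieGen("1.0", 2.5, 8, 10),     # 8b/10b encoding
    PcieGen("2.0", 5.0, 8, 10),     # 8b/10b encoding
    PcieGen("3.0", 8.0, 128, 130),  # 128b/130b encoding
)

for gen in GENERATIONS:
    per_lane = gen.lane_mb_per_sec()
    widths = ", ".join(f"{n}x={per_lane * n:.0f}" for n in (1, 4, 8, 16))
    print(f"PCIe {gen.name}: encoding loss {gen.encoding_overhead():.1%}, "
          f"MB/s by width: {widths}")
```

The 3.0 per-lane result comes out slightly under 1000 MB/Sec, which is why "1 GB/Sec" is best treated as a rounded figure.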

So, what does all this mean? If you have an older server, don’t use the PCI slots for disk controllers. Be careful with PCI-X cards and their placement. If you have PCIe, you need to know whether it is 1.0 or 2.0 capable and what speed the physical slots actually operate at.
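As a quick sanity check when planning card placement, a PCIe link settles on the highest version and lane count that both the card and the slot support. A minimal sketch of that rule follows, using the per-lane figures quoted earlier. The function name and the example card and slot are hypothetical, and the lane counts mean how many lanes are actually wired, not the physical size of the connector.

```python
# Per-lane ratings quoted in the article, keyed by PCIe major version (MB/s).
PER_LANE_MB_PER_SEC = {1: 250, 2: 500, 3: 1000}


def negotiated_link(card_lanes: int, card_gen: int,
                    slot_lanes: int, slot_gen: int) -> tuple[int, int, int]:
    """Return (lanes, generation, peak MB/s) for a card placed in a slot.

    Both ends fall back to the lowest common lane count and generation.
    """
    lanes = min(card_lanes, slot_lanes)
    gen = min(card_gen, slot_gen)
    return lanes, gen, lanes * PER_LANE_MB_PER_SEC[gen]


# Hypothetical example: an 8x PCIe 2.0 controller in a slot that is
# physically 16x but only wired for 4 lanes of PCIe 1.0.
lanes, gen, peak = negotiated_link(card_lanes=8, card_gen=2,
                                   slot_lanes=4, slot_gen=1)
print(f"Link runs at {lanes}x PCIe {gen}.0, peak {peak} MB/s")

# The same card in a full 8x PCIe 2.0 slot.
lanes, gen, peak = negotiated_link(card_lanes=8, card_gen=2,
                                   slot_lanes=8, slot_gen=2)
print(f"Link runs at {lanes}x PCIe {gen}.0, peak {peak} MB/s")
```

In this made-up case the wrong slot costs the controller three quarters of its peak bandwidth, so it is worth checking the motherboard documentation before you seat the card.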